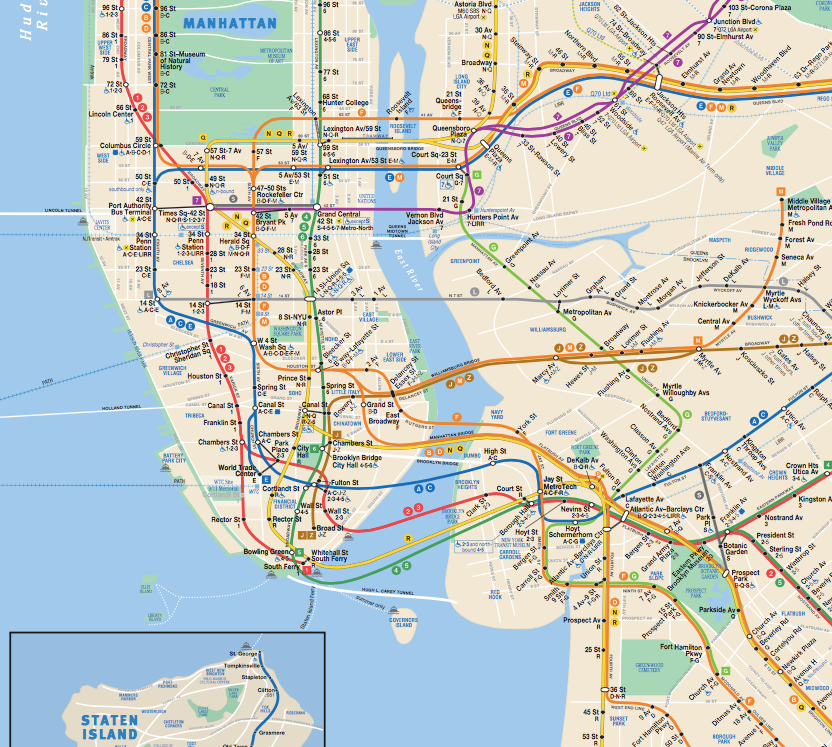

NYC Subway System: Does Rain Affect Subway Ridership?

Do more people ride the subway when it's raining than when it isn't? The answer is less intuitive than you might think. This project involved wrangling real NYC Subway data, then analyzing it with statistical methods and data visualization.

You can read my analysis here, or look at the code used to create it. This project was completed as part of the Udacity Data Analyst Nanodegree.

Skills used: Python, NumPy, Pandas, PandasSQL, SQL, ggplot, linear regression, gradient descent, MapReduce